Every day at 10:45am, my little bro Amar messages me on Telegram.

He asks about my workout. My weight. What I ate. How my macros look. How the projects are going. And if a project has been quiet too long — he doesn't just ask. He offers to do the work himself.

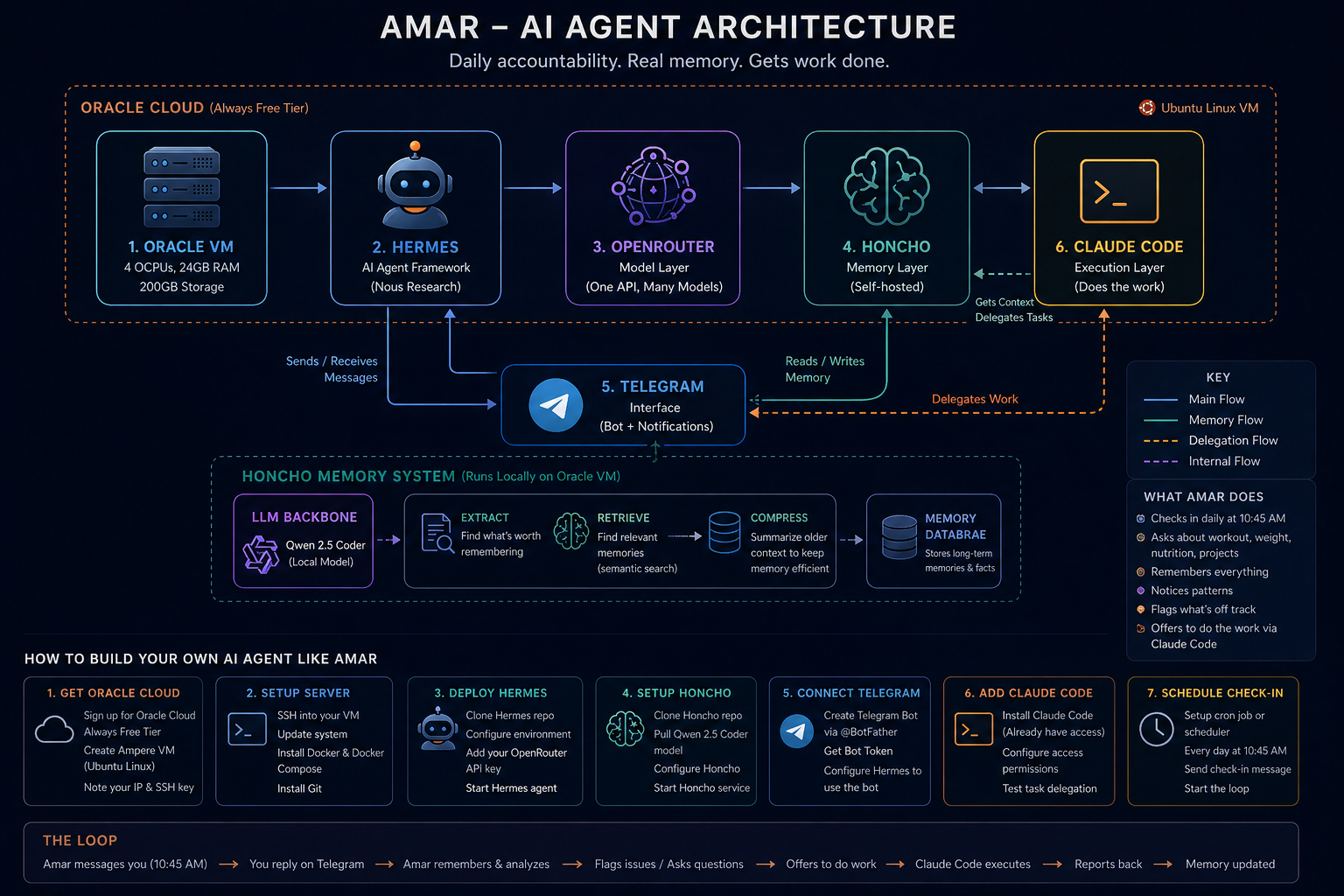

Amar is an AI agent. He runs on a free Oracle server in the cloud. He has memory — real memory, across every session. And I built him for almost nothing.

Here's the full story and exactly what's under the hood.

Why My Old Systems Didn't Scale

For years I tracked projects in a diary and Google Sheets. Calendar reminders only for meetings. That was it.

It worked — until it didn't. The diary doesn't ask you anything. The spreadsheet doesn't notice when you've gone quiet on a project for three days. You have to remember to go to them. And some days you don't.

I wanted something that would come to me. Not wait for me.

That's the design principle behind Amar. He has initiative. He has memory. He is not waiting for me to log in.

How It Started: The China Rush That Got Me Curious

A while back, people in China were rushing to buy Mac Minis to host OpenClaw. The self-hosted AI agent wave was picking up fast and everyone wanted local compute.

It got me curious. I started fiddling with OpenClaw myself — running it with my Claude subscription as the underlying model. It worked. Claude was doing the thinking, OpenClaw was the agent shell around it.

Then I found Hermes — an agent framework by Nous Research, similar to OpenClaw but with its own architecture and approach to agentic behaviour. I moved over and kept running Claude as the model inside it.

Then Anthropic banned using Claude subscriptions with third-party apps. Fair enough — their product, their rules. But I needed a model layer that wouldn't disappear on me.

That's when OpenRouter came in. One API, every major model. I kept Hermes exactly as it was and just pointed the model calls to OpenRouter instead. The whole setup became more flexible than before — I can try any model the day it launches without touching the rest of the system.

Oracle's Always Free Tier completed the picture. Most people walk past it. An Ampere ARM VM with 4 OCPUs and 24 GB RAM. Permanent. No trial countdown, no expiry surprise. The people buying hardware to host OpenClaw didn't need to. The compute was already there, free.

None of this was planned. Each obstacle pushed the system into a better shape than I started with.

The Stack: What Amar Is Made Of

Six pieces. Each chosen for a specific reason.

Oracle Free Tier Linux VM

The server everything lives on. Always on. Ubuntu Linux. SSH access. Real compute — not a toy instance. This is where Hermes runs, where Honcho's memory database lives, where the Telegram bot loops. Zero monthly cost.

Hermes — The Agent Framework

Built by Nous Research. Think of it like OpenClaw — a framework that gives the AI agent its structure, tool-calling ability, scheduling, and loop logic. Hermes is what makes Amar an agent rather than just a chat window. The model running inside can change. The agent framework stays.

OpenRouter — The Model Layer

One API that routes to every major model. When Anthropic closed off subscription access to third-party apps, OpenRouter was the clean solution. I kept Hermes exactly as it was and just pointed the model calls here instead. Right now I'm running MiniMax 2.7. When something sharper launches, I'll try it. The switch takes five minutes and nothing else breaks.
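To make "pointed the model calls here" concrete, here is a minimal sketch of what a call through OpenRouter can look like. It assumes OpenRouter's OpenAI-style chat completions endpoint. The model slug, the `AGENT_MODEL` environment variable, and the function names are placeholders of mine, not Hermes settings. In the real setup Hermes makes this call for you.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Placeholder slug (assumption): use whatever slug OpenRouter lists for your model.
MODEL = os.environ.get("AGENT_MODEL", "example-vendor/example-model")


def build_chat_request(model: str, user_text: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(model: str, user_text: str, api_key: str) -> str:
    """Send the request and return the text of the first reply."""
    req = build_chat_request(model, user_text, api_key)
    with urllib.request.urlopen(req, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Swapping models is then a one-string change: set a different `MODEL` and nothing else in the loop has to know.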

Honcho — The Memory Layer

This is the real unlock, and it runs entirely on the same free Oracle VM. Honcho is a self-hosted memory management system for AI agents. It needs its own LLM to work — not to chat, but to think about memory itself.

I run Qwen 2.5 Coder locally as Honcho's backbone. It handles three things:

- Extracting what's actually worth remembering from a conversation — not everything is signal

- Retrieving the right memories when they're relevant — semantic matching, not keyword search

- Compressing older context so it doesn't bloat the active window over time

Without this, every conversation with Amar starts from zero. With it, Amar knows your last few workouts, your current goals, your project status, your macro targets — carried forward across every session. A plain database stores facts. Honcho understands which facts matter right now.
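The three jobs are easier to picture with a toy version. This sketch is not Honcho's API, and it uses no LLM. A keyword filter stands in for extraction, word overlap stands in for semantic matching, and a joined string stands in for a real summary. Every name in it (`ToyMemoryStore`, `extract`, `retrieve`, `compress`) is made up for illustration.

```python
import re
from dataclasses import dataclass, field


def _words(text: str) -> set[str]:
    """Lowercased words with surrounding punctuation stripped."""
    return {w.strip(",.?!").lower() for w in text.split()}


@dataclass
class Memory:
    text: str
    day: int  # day number on which the fact was recorded


@dataclass
class ToyMemoryStore:
    memories: list[Memory] = field(default_factory=list)

    def extract(self, message: str, day: int, keywords: set[str]) -> None:
        """Keep only sentences that mention a tracked keyword."""
        for sentence in re.split(r"[.!?]\s+", message):
            sentence = sentence.strip(" .!?")
            if sentence and keywords & _words(sentence):
                self.memories.append(Memory(sentence, day))

    def retrieve(self, query: str, top_k: int = 2) -> list[str]:
        """Rank memories by words shared with the query, newest first on ties."""
        q = _words(query)
        scored = [(len(q & _words(m.text)), m.day, m.text) for m in self.memories]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: (s[0], s[1]), reverse=True)
        return [text for _, _, text in scored[:top_k]]

    def compress(self, today: int, max_age_days: int) -> None:
        """Fold memories older than max_age_days into a single summary entry."""
        old = [m for m in self.memories if today - m.day > max_age_days]
        if len(old) < 2:
            return  # nothing worth folding yet
        recent = [m for m in self.memories if today - m.day <= max_age_days]
        summary = Memory("Earlier: " + "; ".join(m.text for m in old),
                         max(m.day for m in old))
        self.memories = [summary] + recent
```

The real thing replaces each stand-in with a model call, which is why Honcho needs its own LLM. The shape of the loop stays the same: filter what goes in, rank what comes out, shrink what gets old.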

Telegram

The interface. Already on my phone. Notifications that cut through. No new app to open, no new habit to build. Amar shows up there at 10:45am and we talk.
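Under the hood, "shows up at 10:45am" comes down to two small things: working out when the next 10:45 is, and posting to the Telegram Bot API's `sendMessage` method. In my setup Hermes owns the scheduling, so treat this as a sketch of the idea rather than how Hermes does it. The function names are mine, and it assumes the server clock is set to your local time.

```python
import json
import urllib.request
from datetime import datetime, time, timedelta

CHECK_IN_AT = time(10, 45)  # daily check-in time, in the server's local time


def next_check_in(now: datetime) -> datetime:
    """Return the next 10:45 strictly after `now` (naive local datetimes)."""
    candidate = datetime.combine(now.date(), CHECK_IN_AT)
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


def build_send_message(bot_token: str, chat_id: int, text: str) -> urllib.request.Request:
    """Build (but do not send) a Telegram Bot API sendMessage request."""
    url = f"https://api.telegram.org/bot{bot_token}/sendMessage"
    payload = {"chat_id": chat_id, "text": text}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

A loop that sleeps until `next_check_in(datetime.now())` and then sends the request with `urllib.request.urlopen` is enough to get a first message out the door. A cron entry does the same job with less code.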

Claude Code in the Cloud

The execution arm. When Amar decides a task needs actual work done — not tracking, but doing — he invokes Claude Code. More on this below.

10:45am — The Daily Ritual

The check-in is scheduled. Every day, 10:45am, Amar sends the first message.

It usually goes something like:

"Did you train today? Last session was handstand + front lever work on Tuesday. Weight yesterday was 74.2kg. What did you do today?"

I reply. He logs it. Asks a follow-up — recovery, sleep, whether protein hit the target. Not because I programmed each question explicitly, but because Honcho gives him the context to ask relevant things based on what's actually been happening.

It's not a form. It's a conversation. And because he remembers everything, it feels like talking to someone who actually pays attention.

The project check-in follows right after. Active projects, current status, what I said I'd do next. If something's been quiet too long, he flags it. Not with a lecture, just a question.

"Your icanbefitter technical post hasn't had an update. Want to move it forward today?"

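The "quiet too long" check is simple enough to sketch. This is an illustration, not Amar's actual logic: the three-day threshold, the dictionary of last-update dates, and both function names are assumptions of mine.

```python
from datetime import date


def stale_projects(last_update: dict[str, date], today: date, quiet_days: int = 3) -> list[str]:
    """Return names of projects with no update for at least `quiet_days` days."""
    return sorted(
        name for name, updated in last_update.items()
        if (today - updated).days >= quiet_days
    )


def nudge(project: str) -> str:
    """Phrase the flag as a question, not a lecture."""
    return f"Your {project} hasn't had an update. Want to move it forward today?"
```

Feed it whatever the memory layer knows about each project's last mention, and send one `nudge` per stale project in the morning check-in.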
The Memory Layer Changes Everything

Without memory, an AI agent is a vending machine. You put in a prompt, you get an answer. Every session is zero. You re-explain yourself every time. That's not accountability — that's a search engine with extra steps.

With Honcho, the picture builds. Amar notices patterns. He remembers that your front lever has been stalled for three weeks. He knows which days your consistency dips. He has enough history to eventually suggest a deload before you feel like you need one.

This is the difference between a tool you use and a system that works for you.

The Boom Moment: When Amar Offers to Do the Work

This is the part I didn't fully expect.

One morning I mentioned a blog draft sitting half-finished. Amar knew — he'd logged it three days earlier. His response:

"You mentioned the draft is about 40% done. Want me to ask Claude Code to pick it up from where you left off?"

I said yes. Claude Code got the context, found the file, continued the draft. Twenty minutes later I had something to edit instead of a blank cursor mocking me.

That's the leap. From "AI that tracks what you're doing" to "AI that does what you're not doing." The accountability loop closes on itself.

Amar has context (Honcho). He has the ability to delegate (Claude Code). He has initiative (the scheduled check-in). Put those three together and you get something genuinely agentic — not just responsive.
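Here is roughly what that hand-off can look like. The sketch assumes the Claude Code CLI is installed on the server and uses its non-interactive print mode (`claude -p`). How Hermes actually wires the call is not shown here, and the function names and prompt layout are my own.

```python
import subprocess


def build_delegate_command(task: str, context: list[str]) -> list[str]:
    """Fold remembered context and the task into one non-interactive prompt."""
    prompt = "\n".join([
        "Context from earlier check-ins:",
        *[f"- {item}" for item in context],
        "",
        f"Task: {task}",
    ])
    return ["claude", "-p", prompt]


def delegate(task: str, context: list[str], project_dir: str) -> str:
    """Run Claude Code inside the project directory and return what it prints."""
    result = subprocess.run(
        build_delegate_command(task, context),
        cwd=project_dir,
        capture_output=True,
        text=True,
        check=True,  # raise if the CLI exits with an error
    )
    return result.stdout
```

The part that matters is the context list. Without the memory layer supplying it, the execution arm has nothing to pick up from.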

The System Is Designed to Evolve

Hermes was the starting framework. The model inside has already changed more than once. When Anthropic shut off subscription access to third-party apps, I didn't rebuild — I just added OpenRouter and kept going. When a better model launches tomorrow, the switch is five minutes.

That's the architecture I care about. Not any specific tool. The modularity. As better models launch, Amar gets sharper. As Honcho matures, the memory gets richer. As I add new integrations, he gets more capable. The system compounds because it's built to be replaced piece by piece — not rebuilt from scratch.

When I add something significant — a new integration, a model that meaningfully changes how Amar behaves, a new use case that surprised me — I'll write about it. This is version one. There will be more.

What It Actually Cost Me

- Oracle Free Tier VM: ₹0. Permanent. 4 OCPUs, 24 GB RAM, 200 GB storage.

- Hermes (self-hosted agent framework): ₹0.

- Honcho (self-hosted memory layer): ₹0.

- Qwen 2.5 Coder (Honcho's LLM backbone): ₹0. Open weights, runs comfortably on the free tier.

- OpenRouter: Pay per use — minimal cost for daily check-ins on efficient models.

- Telegram Bot API: ₹0.

- Claude Code: Subscription I was already paying for development work — not an added cost for the agent loop.

Time to build: an honest one to two weekends. Not the "built it in an afternoon" kind. The kind where you debug SSH configs at 2am and figure out why the bot isn't sending. Real time. Worth it.

If you're thinking about building something like this — start with the memory layer. Get Telegram running. Get Honcho on your free Oracle VM. The compute is there. The models are there. The only real investment is the time you spend wiring it together.

Worth every hour. Now go build.